KubeRay and Model Deployment

Vendor Information

KubeRay is an open-source project, and Anyscale is the vendor behind it. Anyscale provides a unified compute platform that makes it easy to develop, deploy, and manage scalable AI and Python applications using Ray.

License Information

The KubeRay application itself is open source.

Business Purpose

The KubeRay platform is used for machine learning model deployment.

Usage Information

The KubeRay platform is intended for model deployment by a limited group of people. The platform was initially tested by deploying deep learning (DL) models. We also tested large language models (LLMs): they deploy successfully, but the deployment has trouble handling heavy traffic.

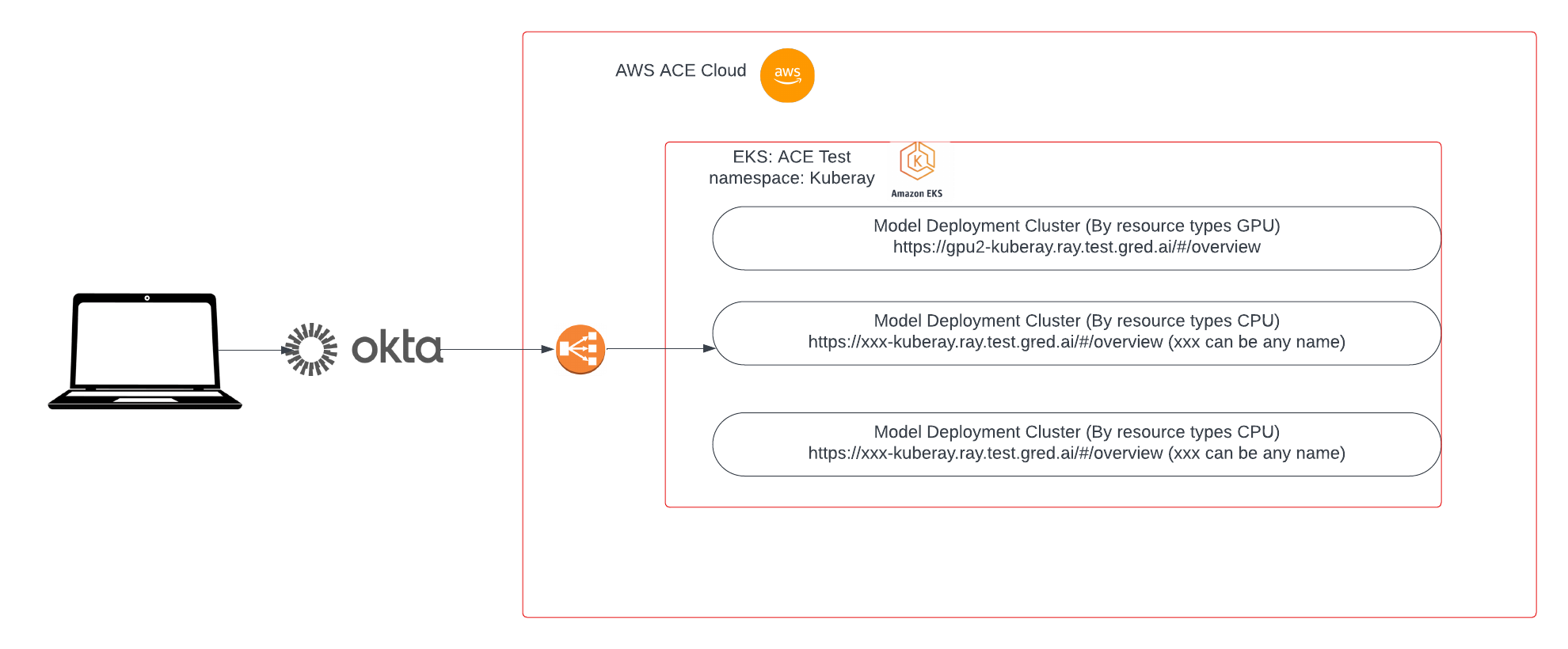

Users must log in through Okta authentication.

:warning: Deploying LLMs is expensive. TODO: cost optimization.

Deployment Model

This application is deployed on the ace-test EKS cluster. KubeRay itself is open-source software provided by Anyscale.

- All deployments are managed by us in the same way as our other EKS applications (Okta + ALB).

- The EKS deployment configurations (image, GPU, CPU, etc.) are managed through a Helm chart.

- User groups are managed by Okta.

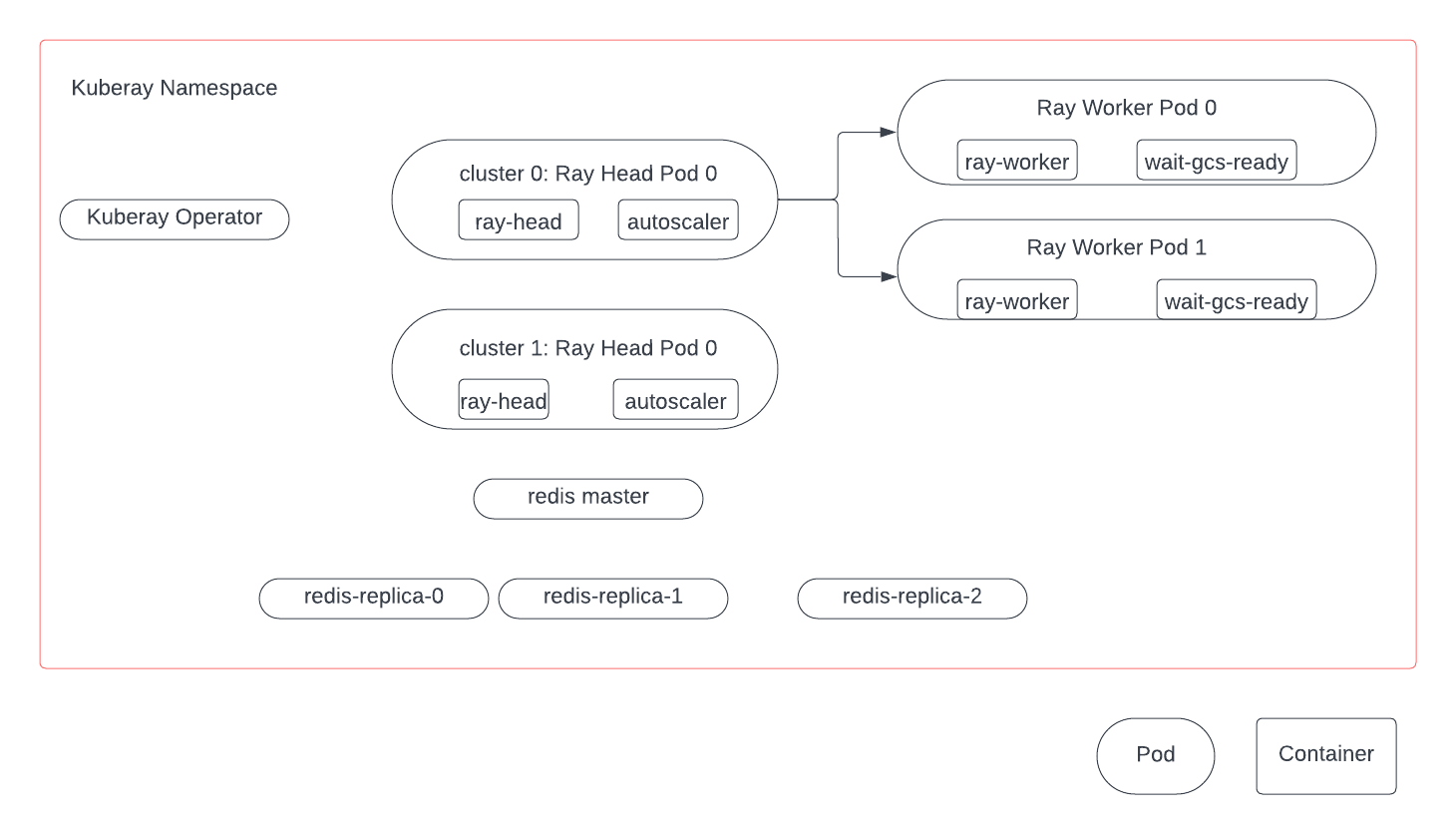

- One master and three replica Redis pods are deployed in the same namespace to provide fault tolerance.
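
To make the Helm-managed settings and the Redis-backed fault tolerance more concrete, here is a minimal Python sketch that builds a RayCluster manifest as a plain dictionary. It assumes the `ray.io/v1` RayCluster resource, the `ray.io/ft-enabled` annotation, and the `RAY_REDIS_ADDRESS` environment variable that KubeRay uses for GCS fault tolerance. The cluster name, image tag, Redis address, namespace, and resource sizes are hypothetical placeholders, not the values in our Helm chart.

```python
import json


def build_ray_cluster(name, image, redis_address, worker_replicas=1, gpus_per_worker=0):
    """Return a RayCluster manifest as a dict. All argument values are illustrative."""

    def container(container_name, cpu, memory, gpus=0, env=None):
        # Resource limits correspond to the image / CPU / GPU settings kept in the Helm chart.
        limits = {"cpu": cpu, "memory": memory}
        if gpus:
            limits["nvidia.com/gpu"] = gpus
        spec = {"name": container_name, "image": image, "resources": {"limits": limits}}
        if env:
            spec["env"] = env
        return spec

    # Assumption: the head pod reaches the external Redis through RAY_REDIS_ADDRESS.
    head_env = [{"name": "RAY_REDIS_ADDRESS", "value": redis_address}]
    return {
        "apiVersion": "ray.io/v1",
        "kind": "RayCluster",
        "metadata": {
            "name": name,
            "namespace": "kuberay",  # assumed namespace name
            "annotations": {"ray.io/ft-enabled": "true"},  # turns on GCS fault tolerance
        },
        "spec": {
            "headGroupSpec": {
                "rayStartParams": {},
                "template": {"spec": {"containers": [container("ray-head", "2", "8Gi", env=head_env)]}},
            },
            "workerGroupSpecs": [
                {
                    "groupName": "workers",
                    "replicas": worker_replicas,
                    "minReplicas": worker_replicas,
                    "maxReplicas": worker_replicas,
                    "rayStartParams": {},
                    "template": {
                        "spec": {"containers": [container("ray-worker", "4", "16Gi", gpus=gpus_per_worker)]}
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    manifest = build_ray_cluster("demo-cluster", "rayproject/ray:2.9.0", "redis-master:6379",
                                 worker_replicas=2, gpus_per_worker=1)
    print(json.dumps(manifest, indent=2))
```

In practice these fields would be rendered by the Helm chart rather than built by hand; the sketch only shows which parts of the resource the chart values end up controlling.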

High Level Design

The first diagram explains the overall architecture of KubeRay.

Inside the ace-test/kuberay namespace there are four kinds of pods:

- KubeRay operator: controls Ray cluster deployment

- Ray Head: schedules worker pods

- Ray Worker: runs Ray applications

- Redis pods: ensure fault tolerance
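
As a rough way to check that all four kinds of pods are present, the sketch below groups pods by role using their labels. It assumes KubeRay's `ray.io/node-type` label (`head` or `worker`) on Ray pods. The operator and Redis label values are hypothetical and depend on the Helm charts in use, so adjust them to match the actual deployment.

```python
from collections import defaultdict

# Assumption: KubeRay sets "ray.io/node-type" to "head" or "worker" on Ray pods.
# The two label pairs below are hypothetical and depend on the Helm charts used.
OPERATOR_LABEL = ("app.kubernetes.io/name", "kuberay-operator")
REDIS_LABEL = ("app.kubernetes.io/name", "redis")


def pod_role(labels):
    """Map a pod's labels to one of the four pod kinds, or "other"."""
    node_type = labels.get("ray.io/node-type")
    if node_type == "head":
        return "ray-head"
    if node_type == "worker":
        return "ray-worker"
    if labels.get(OPERATOR_LABEL[0]) == OPERATOR_LABEL[1]:
        return "kuberay-operator"
    if labels.get(REDIS_LABEL[0]) == REDIS_LABEL[1]:
        return "redis"
    return "other"


def group_pods_by_role(pod_list):
    """pod_list: parsed JSON from `kubectl get pods -n <namespace> -o json`."""
    groups = defaultdict(list)
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        groups[pod_role(meta.get("labels") or {})].append(meta.get("name", "<unnamed>"))
    return dict(groups)
```

Feeding it the parsed output of `kubectl get pods -o json` for the namespace should yield one head, one or more workers, the operator, and the Redis pods, provided the label assumptions hold.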

Components

AWS Components

GitHub Components

Check out the repository

Support Information

The ML Engineering team is the owner of the project in ACE:

- Email: Mahdi Abbaspour Tehrani

How to report issues: TBD

All infrastructure issues must be initiated by the ML Engineering team.

Additional Information

- On pod restarts, DL models recover without problems, but LLMs take a long time to recover.
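
Because LLM deployments can take a long time to come back after a pod restart, clients may want to wait for the service to report healthy before sending traffic. The following is a minimal sketch of such a wait loop with exponential backoff. The function names, timeouts, and the health URL in the usage note are all hypothetical; nothing here reflects an existing helper in our repository.

```python
import time
import urllib.error
import urllib.request


def http_probe(url, timeout=5.0):
    """Return True if a GET request to url answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeouts, and non-2xx responses all count as "not ready".
        return False


def wait_until_healthy(probe, max_wait_s=900.0, initial_delay_s=1.0, max_delay_s=60.0,
                       sleep=time.sleep, clock=time.monotonic):
    """Call probe() with exponential backoff until it returns True or max_wait_s elapses."""
    deadline = clock() + max_wait_s
    delay = initial_delay_s
    while True:
        if probe():
            return True
        remaining = deadline - clock()
        if remaining <= 0:
            return False
        # Never sleep past the deadline; double the delay up to max_delay_s.
        sleep(min(delay, remaining))
        delay = min(delay * 2, max_delay_s)
```

A caller could use it as `wait_until_healthy(lambda: http_probe("http://<service-host>:8000/-/healthz"))`, assuming the deployment exposes a health endpoint at that path; the host, port, and path need to be confirmed against the actual setup.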